Dear QF Community,

This is a special post following Anthropic’s recently published report, which outlines what it describes as large-scale “distillation” campaigns targeting its Claude models.

According to the report, three Chinese AI labs, namely DeepSeek, Moonshot AI and MiniMax, generated more than 16 million exchanges with Claude using approximately 24,000 accounts, allegedly in violation of Anthropic’s terms of service and regional access restrictions.

In a nutshell, distillation is a standard and widely used machine learning technique in which a smaller model is trained on the outputs of a stronger one. Frontier labs routinely use it internally to produce smaller, cheaper, and more efficient versions of their own systems.
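To make the idea concrete, here is a minimal sketch of the classic "soft label" formulation of distillation, in which a student is penalised for diverging from a teacher's temperature-softened output distribution. The function names, the toy logits, and the temperature value are illustrative assumptions of ours and are not taken from the report. Distillation through an API would more plausibly involve fine-tuning on generated text rather than on raw logits, so treat this only as an illustration of the underlying principle.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) computed on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)  # teacher "soft labels"
    q = softmax(student_logits, temperature)  # student predictions
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Toy logits (hypothetical values): a student that matches the teacher
# incurs (near) zero loss, a mismatched student incurs a positive loss.
matched = distillation_loss([1.0, 2.0, 3.0], [1.0, 2.0, 3.0])
mismatched = distillation_loss([1.0, 2.0, 3.0], [3.0, 2.0, 1.0])
```

Minimising this loss over many teacher outputs is what pulls the student's behaviour toward the teacher's.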

The issue arises when the same technique is used without authorisation. In that context, distillation can become a means of replicating advanced capabilities without incurring the full research, compute, and data costs required to develop them independently.

Anthropic argues that the campaigns it identified were not typical API usage, but rather structured efforts to extract model capabilities at scale. According to the report, the patterns observed included:

High volume, repetitive prompt structures

Coordinated activity across large numbers of accounts

Targeted elicitation of reasoning traces and tool-use behaviours

Proxy-based “hydra cluster” traffic distribution to evade detection

Anthropic attributes specific areas of focus to each lab:

DeepSeek: Over 150,000 exchanges targeting reasoning capabilities, rubric-based grading tasks, and the generation of step-by-step reasoning traces.

Moonshot AI: Over 3.4 million exchanges focused on agentic reasoning, coding, data analysis, and computer-use agents.

MiniMax: Over 13 million exchanges centred on agentic coding and tool orchestration, including rapid traffic shifts following new model releases.

Anthropic also states that its attribution was based on IP correlation, request metadata, infrastructure indicators, and corroboration from industry partners.

Economic and National Security Implications

The report highlights an asymmetry inherent in API-based frontier models. Labs invest billions in compute infrastructure and data pipelines to train large foundation models. However, if competitors are able to extract high-quality outputs at scale, they may compress development timelines and reduce costs through distillation.

Anthropic also frames distillation as a national security concern. The company argues that models derived through illicit distillation may not retain the original safeguards and could therefore be deployed in ways the originating lab would not permit.

The report links this issue to export controls, suggesting that distillation may weaken policy mechanisms designed to preserve competitive and technological advantages. In Anthropic’s view, restricting access to advanced chips could limit both direct model training and the scale at which distillation can be carried out.

Defensive Measures

Anthropic outlines several countermeasures that are now in place:

Detection classifiers and behavioural fingerprinting systems

Monitoring for coordinated account activity

Detection of chain-of-thought elicitation patterns

Intelligence sharing with other AI labs and cloud providers

Stronger verification requirements for account types commonly used to establish access

The company emphasises that no single organisation can fully address the issue on its own and calls for coordinated responses across both industry and policymakers.

Zero-Knowledge Proofs for “Proof of Training”

At Quantum Formalism (QF), we believe the industry may eventually move toward some form of Zero-Knowledge Proofs (ZKPs) as a mechanism for “Proof of Training.”

In simple terms, a Zero-Knowledge Proof allows one party to demonstrate that a statement is true without revealing the underlying data. More specifically, Zero-Knowledge Succinct Non-Interactive Arguments of Knowledge, known as zk-SNARKs, make it possible to generate compact, publicly verifiable proofs that a particular computation was carried out correctly.

For those who prefer a more concrete and relatable analogy, imagine Pepsi announcing that it has invented an entirely new soft drink formula that just happens to taste remarkably similar to Coca-Cola’s flagship product.

In an ordinary commercial setting, Pepsi could simply state that it developed the formula independently. However, if the stakes were significantly higher, involving intellectual property disputes, regulatory scrutiny, or even national trade restrictions, a verbal assurance would not be enough. The central question would then become clear: how can Pepsi prove that it did not reverse-engineer or copy Coca-Cola’s formula without revealing its own secret recipe?

This is precisely the type of problem Zero-Knowledge Proofs are designed to address. They allow a party to demonstrate that a specific process was genuinely carried out, without disclosing the confidential inputs behind it. In the context of AI, the equivalent would be proving that a model was trained through a full, independent computational process, without exposing proprietary datasets, architectural details, or internal training logs.
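For readers who want to see the mechanics, the sketch below shows one of the simplest zero-knowledge-style protocols: a Schnorr proof of knowledge of a secret exponent, made non-interactive with the Fiat-Shamir heuristic. It is not a zk-SNARK and has nothing to do with model training; it only illustrates how a prover can convince a verifier that it knows a secret without sending it. The group parameters are deliberately tiny toy values chosen by us for readability and offer no security.

```python
import hashlib
import secrets

# Toy parameters (assumption: illustrative only, far too small to be secure).
P = 2039  # prime modulus, P = 2 * Q + 1
Q = 1019  # prime order of the subgroup generated by G
G = 4     # generator of the order-Q subgroup

def challenge(y, t):
    """Fiat-Shamir: derive the challenge by hashing the public values."""
    data = f"{G}|{y}|{t}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def prove(x):
    """Prove knowledge of x such that y = G^x mod P, without revealing x."""
    y = pow(G, x, P)          # public key
    r = secrets.randbelow(Q)  # one-time random nonce
    t = pow(G, r, P)          # commitment to the nonce
    c = challenge(y, t)
    s = (r + c * x) % Q       # response; r masks the secret x
    return y, (t, s)

def verify(y, proof):
    """Check that G^s == t * y^c (mod P), using public values only."""
    t, s = proof
    c = challenge(y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P

secret = 777                        # hypothetical secret known only to the prover
public_key, proof = prove(secret)
accepted = verify(public_key, proof)
```

The verifier never sees `secret`, only `public_key` and the pair `(t, s)`. A Proof of Training would need to replace the statement "I know x" with the vastly larger statement "I ran this training computation", which is where the difficulty lies.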

How could this apply to AI?

The idea is that an AI lab claiming to have independently trained a frontier model could generate a cryptographic proof that it executed a specified training process, such as:

Running a training loop over a defined architecture

Consuming a dataset of defined scale (without revealing its contents)

Expending a verifiable compute budget

Producing a resulting set of model weights derived from that process

The proof would demonstrate that the required computation was performed, without exposing proprietary datasets, training data composition, or internal model details. In theory, verification could be public and lightweight, while the underlying data remains confidential.
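As a rough illustration of what the public side of such a proof might look like, the sketch below builds a "training statement" out of hash commitments: a Merkle root over the dataset records, plus hashes of the architecture description and the final weights. Every name and field here is a hypothetical of ours, not part of any existing standard. The commitments only bind a lab to specific inputs and outputs; they do not prove that training actually happened, which is the job the zero-knowledge proof itself would have to do. A production scheme would also salt the leaves so that low-entropy records cannot be guessed from their hashes.

```python
import hashlib

def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_root(records):
    """Merkle root over a list of byte strings.

    Leaves and internal nodes use different prefixes; on levels with an
    odd number of nodes the last node is duplicated.
    """
    if not records:
        raise ValueError("need at least one record")
    level = [sha256(b"\x00" + r) for r in records]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        level = [sha256(b"\x01" + level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
    return level[0]

def training_statement(dataset_records, architecture, weights, claimed_flops):
    """Public statement a proof could refer to (hypothetical format)."""
    return {
        "dataset_root": merkle_root(dataset_records).hex(),
        "dataset_size": len(dataset_records),
        "architecture_hash": sha256(architecture.encode()).hex(),
        "weights_hash": sha256(weights).hex(),
        "claimed_flops": claimed_flops,  # asserted by the lab, not verified here
    }

# Toy inputs standing in for a real dataset, model config, and checkpoint.
statement = training_statement(
    [b"example 1", b"example 2", b"example 3"],
    architecture="toy-transformer, 2 layers",
    weights=b"\x01\x02\x03\x04",
    claimed_flops=10**9,
)
```

Publishing only `statement` reveals the dataset's size and a fingerprint of its contents, while the records themselves stay private.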

We will publish a more detailed follow-up on this idea. For now, it is important to acknowledge the significant technical challenges involved, including:

Current ZK systems are computationally expensive, and applying them to full-scale AI training would introduce substantial overhead.

Stochastic gradient descent introduces randomness and non-determinism that would need to be reconciled with deterministic proof systems.

Proving dataset characteristics (e.g., scale, entropy, or provenance commitments) without revealing sensitive data is technically complex.

Any “Proof of Training” standard would only be effective if widely adopted across industry participants.
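The second challenge in the list above can be seen even in a few lines of code. The sketch below, with illustrative names of our own, shows two sources of run-to-run variation in training: the order in which examples are visited, which becomes reproducible once the random seed is fixed, and floating-point addition, whose result depends on the order of operations and therefore on how work is split across parallel hardware.

```python
import random

def batch_order(num_examples, seed):
    """Return a shuffled example order that depends only on the seed."""
    order = list(range(num_examples))
    random.Random(seed).shuffle(order)
    return order

# Fixing the seed makes the data order replayable.
reproducible = batch_order(10, seed=42) == batch_order(10, seed=42)

# Floating-point addition is not associative, so summing the same
# values in a different order can give a different result.
left = (0.1 + 0.2) + 0.3
right = 0.1 + (0.2 + 0.3)
order_matters = left != right
```

A proof system would therefore plausibly need to commit to the seed and pin down the exact order of arithmetic, or replace floating-point numbers with fixed-point or finite-field arithmetic, before a training run could be treated as a single well-defined computation.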

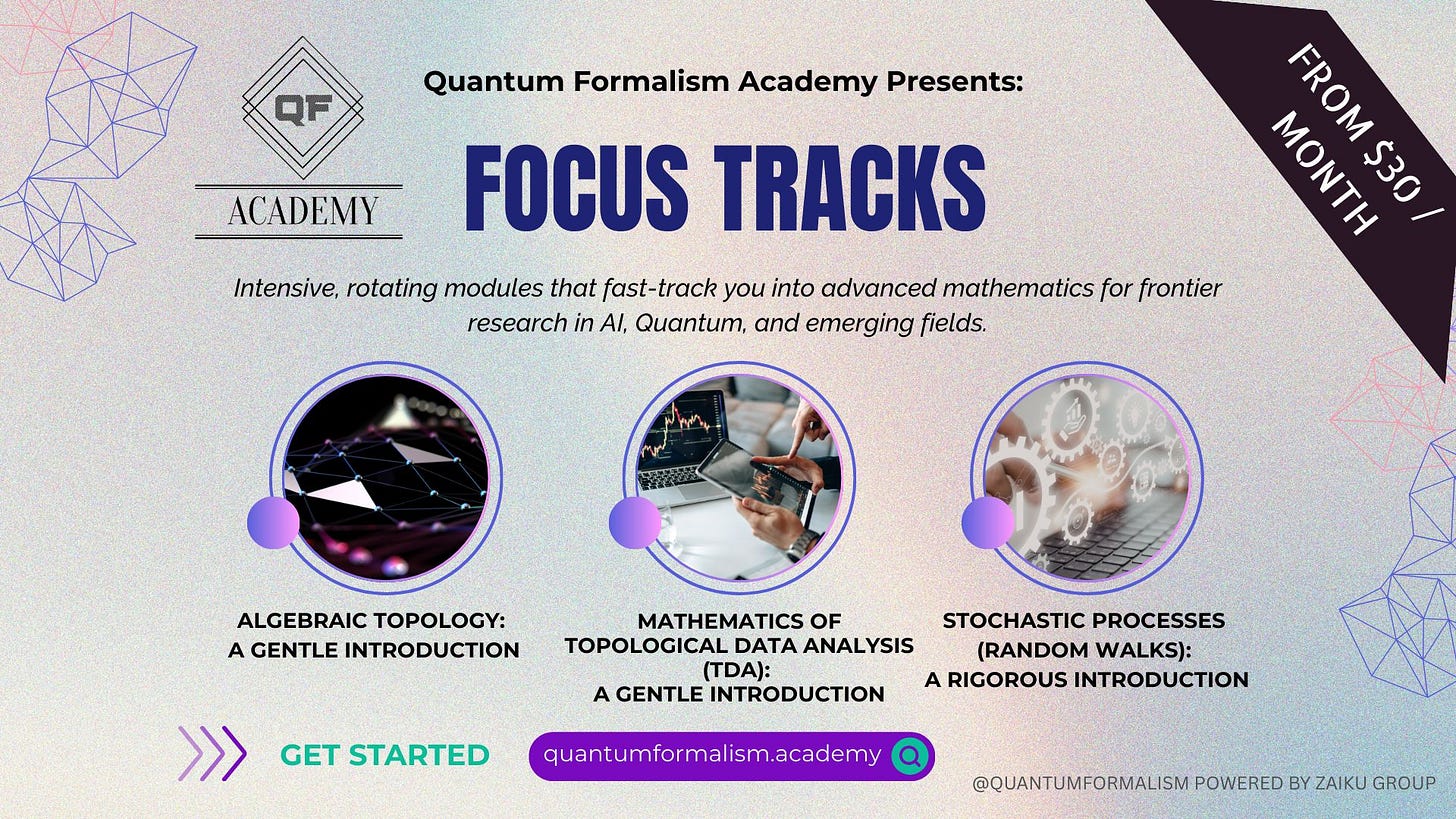

The concept may appear promising from a cryptographic standpoint. However, translating it into practical infrastructure for frontier AI would require meaningful advances in verifiable computation, systems design, and industry coordination. In our view, the first two areas will likely demand deeper mathematical innovation and new theoretical breakthroughs, particularly in the kinds of advanced mathematics we explore at QF Academy.

A podcast narration version of this post will also be uploaded to Spotify and YouTube.

Wishing you a wonderful rest of the week.

Quantum Formalism (QF) team