Dear QF Community,

This is a special episode focusing on Moltbot, Moltbook, and the broader safety challenges that arise when AI systems are deployed in distributed, multi-agent settings.

As many of you may already be aware, Moltbot, recently renamed OpenClaw, is a new consumer-facing AI tool that has gained rapid attention over the past two weeks. Developed as an open-source project by its founder, Peter Steinberger, it is positioned as a personal AI assistant designed to help users automate everyday tasks and interact more fluidly with digital systems through agent-based workflows. Rather than focusing on a single narrow capability, OpenClaw emphasises orchestration, allowing tasks to be delegated across tools and processes in a way that reflects a broader shift toward agent-centric software design.

What has made Moltbot particularly notable is not just its functionality, but the ecosystem that has formed around it, including Moltbook, a social platform where large numbers of agents appear to interact, post content, and build reputation in shared spaces.

The recent attention surrounding Moltbook, including public discussions about its security weaknesses and the ease with which agents could be impersonated or manipulated, has brought into focus a much deeper set of questions. These are not simply questions about implementation quality or isolated security oversights, but about what happens when AI agents are allowed to coordinate, communicate, and operate collectively without robust mechanisms for identity, trust, oversight, and accountability.

Another aspect that drew the attention of security researchers is the “move fast and break things” philosophy that appears to underpin the project, particularly as reflected in a statement the founder made during a recent interview. In describing his development process, he explained:

> "I ship code I don’t read. I have a vision for how the system should work, and I rely on AI to implement it."

In this episode, we use Moltbot and Moltbook as a concrete entry point into a wider discussion about AI safety in distributed environments. Drawing on recent research into multi-agent systems and distributional approaches to AI safety, we explore how risks shift once intelligence and agency become properties of a network rather than of a single model. We discuss why traditional, model-centric safety frameworks struggle in these settings, how vulnerabilities can propagate through agent networks, and why governance, security, and incentive design need to be treated as first-class components of any agentic ecosystem.

Our goal is not to single out any one project, but to use a timely and visible example to examine the structural challenges that are likely to become more common as agent-based systems continue to proliferate. Seen this way, Moltbot and Moltbook are best understood not as anomalies, but as early signals of a transition toward more distributed forms of AI, where safety, coordination, and trust must be designed in at the system level from the outset rather than patched after the fact.

A narrated podcast version of this post will also be uploaded to Spotify and YouTube.

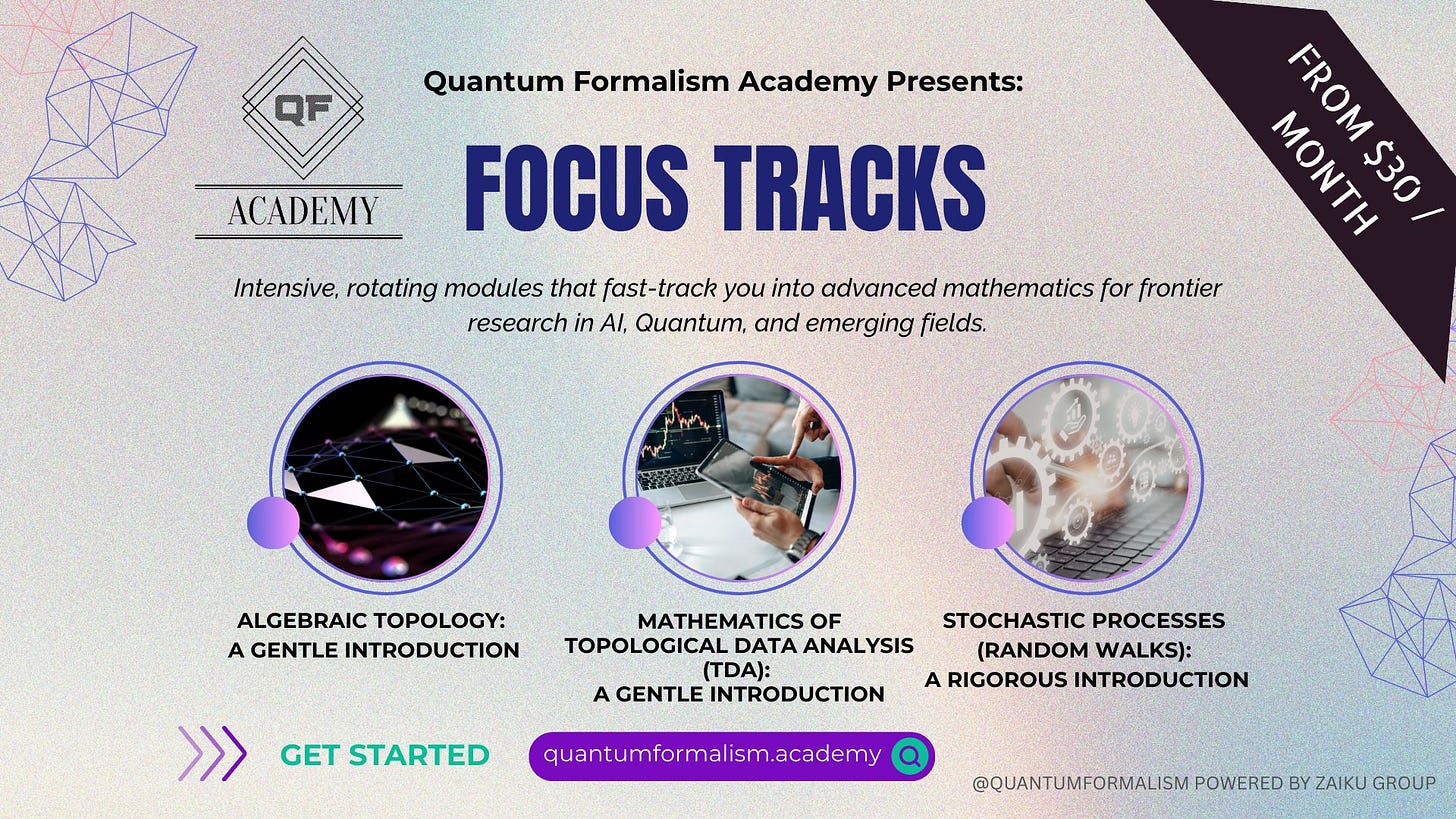

If you find our content useful, you may want to consider supporting it by becoming a paid Substack member. Paid subscribers get access to additional material, including extended analysis, bonus episodes, and early previews of upcoming content, and, just as importantly, your support helps us continue to produce careful, independent work on topics that deserve more than surface-level treatment.

Wishing you a wonderful rest of the week.

QF Academy team